Grimlakin

Forum Posting Supreme

- Joined

- Jun 24, 2019

- Messages

- 8,247

- Points

- 113

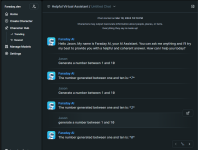

I did this in Windows, largely following the instructions I found here:

https://www.howtogeek.com/881317/how-to-run-a-chatgpt-like-ai-on-your-own-pc/

The last bit, where it has you use a command line to download the actual AI engine (or whatever you want to call it), fails. If you go into Docker and expand the project, you can launch a web interface and download the AI engine of your choice from there.

Be warned... when they say this takes a LOT of CPU and a LOT of RAM, they are not effing about.

I run the mid-tier 13B engine and it can easily max out my 5900X if I let it have the threads. On top of that, the memory load pretty much eats all it can without pushing the OS over.
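For anyone wondering why a 13B model is so hungry, here is a rough back-of-the-envelope sketch of the memory the weights alone need. The bits-per-weight values are assumptions on my part (I don't know which precision a given download uses), and the function name is just made up for illustration. Real usage will be higher once you add the context buffer and the runtime itself.

```python
def weights_gib(params_billion: float, bits_per_weight: int) -> float:
    """Approximate memory for the model weights alone, in GiB.

    Ignores context buffers and runtime overhead, so treat the
    result as a lower bound, not a measurement.
    """
    total_bytes = params_billion * 1e9 * bits_per_weight / 8
    return total_bytes / 2**30


# Assumed precisions; the actual one depends on the model file you download.
for bits in (4, 8, 16):
    print(f"13B at {bits}-bit: ~{weights_gib(13, bits):.1f} GiB")
```

Even at the smallest assumed precision the weights take several GiB before Windows, Docker, and the Linux VM get their share, which lines up with how quickly the memory fills.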

It was fun to set up, and now I know I have a built-in Linux VM in my Windows OS.

To be fair, I'm running Windows 11 Pro. I would suggest the same for anyone following this path.

Now I am looking at going to 64 GB of RAM by adding another 32 GB kit to the one I already have. The price isn't bad today, either.
